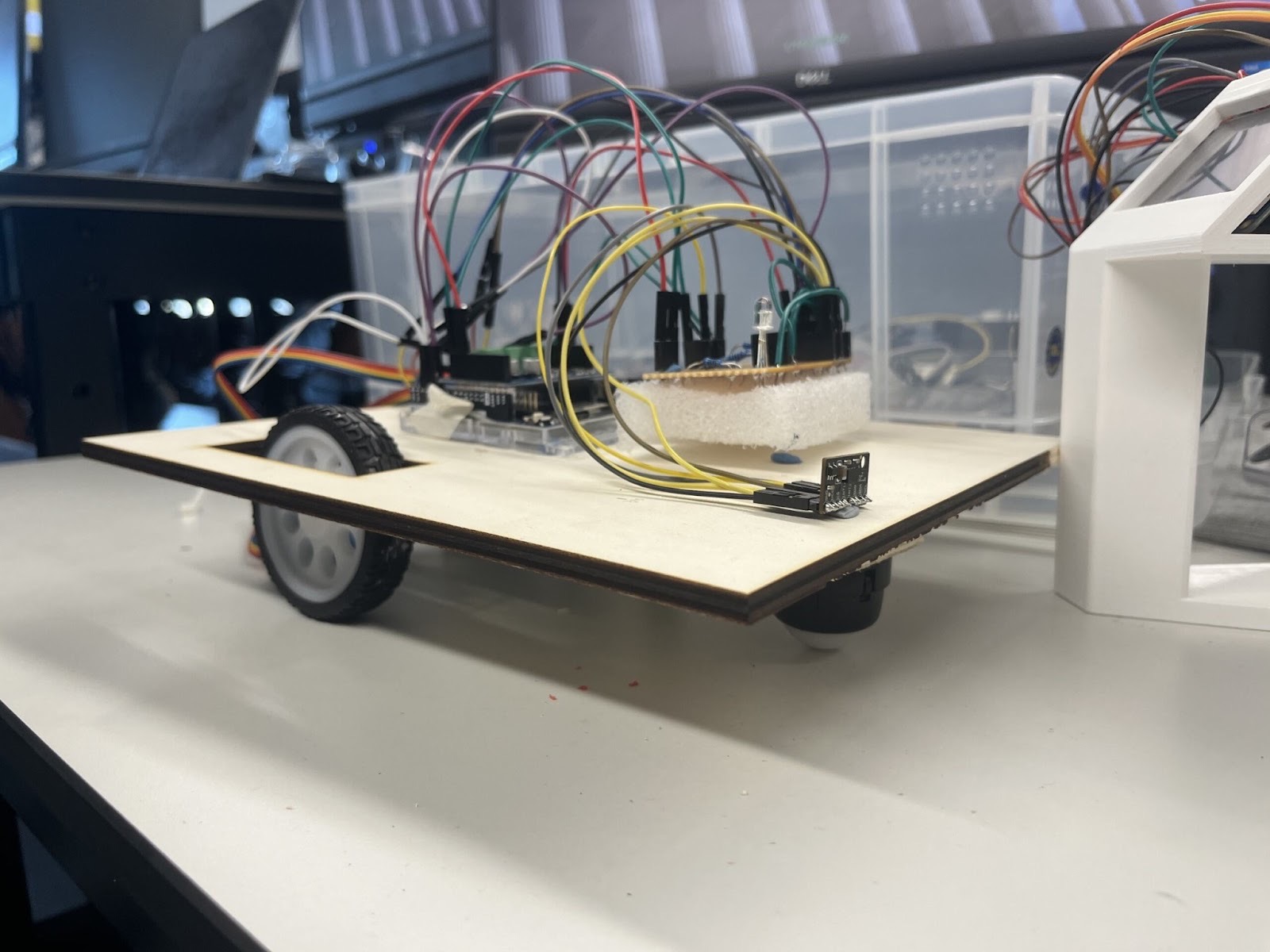

Designed and built a semi-autonomous differential-drive mobile robot with gesture-based wireless control, integrating multi-sensor fusion, real-time localization, and intuitive human-machine interfaces for educational and light patrol applications.

Code: https://github.com/MightyBrushwagg/SweepR

Project Vision:

Developed SweepR as a cost-effective alternative to commercial platforms like Pioneer 3-DX and TurtleBot, combining intuitive motion-based control (inspired by the Wii Remote) with autonomous navigation capabilities. The system leverages wheel odometry and accelerometer-based state detection for self-localization and collision awareness.

Technical Implementation:

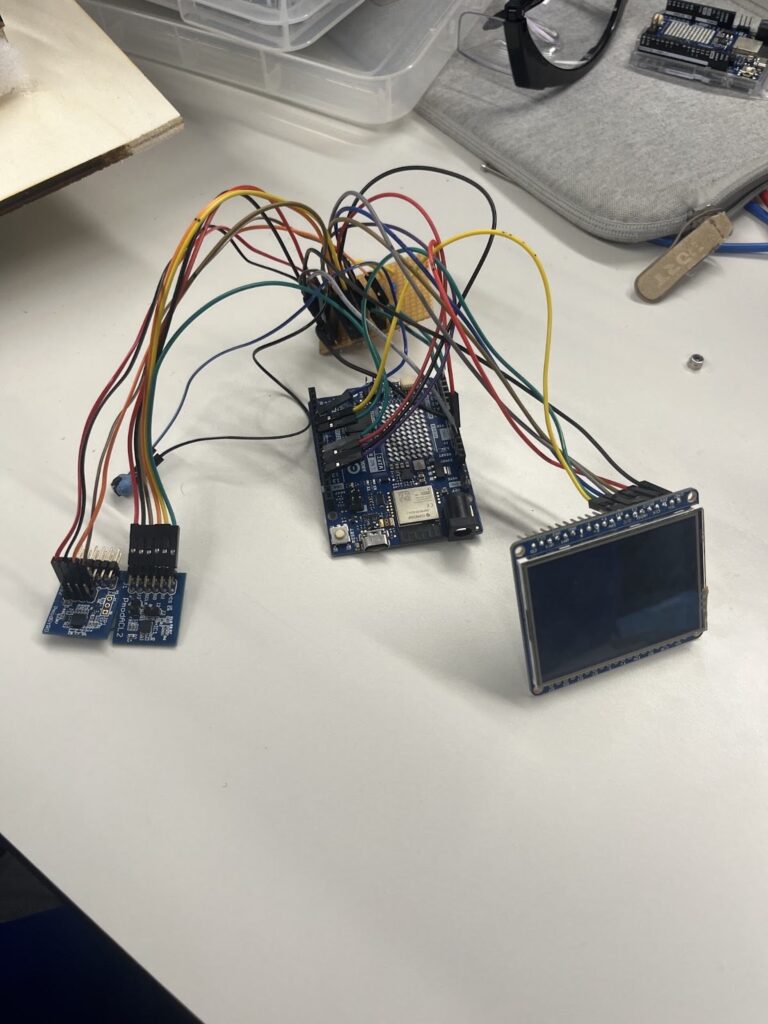

- Wireless Control System: Engineered a custom handheld controller using Arduino Uno R4 with WiFi UDP communication, integrating Pmod ACL2 accelerometer and Pmod GYRO gyroscope for 6-DOF motion tracking

- Sensor Fusion Algorithm: Implemented complementary filtering between accelerometer gravity vectors and integrated gyroscope data to enable tilt-based directional control (pitch for speed, roll for steering), with dynamic mode-switching triggered by gesture detection
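A minimal sketch of how a complementary filter and tilt-to-wheel mapping of this kind can look. The names (`TiltFilter`, `tiltToWheels`), the axis and sign conventions, the blend weight, and the mixing rule are illustrative assumptions, not taken from the SweepR repository:

```cpp
#include <cmath>

// Hypothetical helper: clamp a value to [-1, 1].
static double clampUnit(double v) {
    return v < -1.0 ? -1.0 : (v > 1.0 ? 1.0 : v);
}

// Complementary filter: trust the integrated gyro over short time scales
// and the accelerometer gravity direction over long ones.
struct TiltFilter {
    double alpha;     // weight on the gyro path (assumed value, e.g. 0.98)
    double pitchDeg;  // current pitch estimate, degrees
    double rollDeg;   // current roll estimate, degrees

    explicit TiltFilter(double a) : alpha(a), pitchDeg(0.0), rollDeg(0.0) {}

    // ax, ay, az in g; gyro rates in deg/s; dt in seconds.
    void update(double ax, double ay, double az,
                double pitchRateDps, double rollRateDps, double dt) {
        const double kRadToDeg = 180.0 / 3.14159265358979323846;
        // Angles implied by the gravity vector alone (assumed axis convention).
        double accPitch = std::atan2(-ax, std::sqrt(ay * ay + az * az)) * kRadToDeg;
        double accRoll  = std::atan2(ay, az) * kRadToDeg;
        // Blend the gyro-propagated estimate with the accelerometer estimate.
        pitchDeg = alpha * (pitchDeg + pitchRateDps * dt) + (1.0 - alpha) * accPitch;
        rollDeg  = alpha * (rollDeg  + rollRateDps  * dt) + (1.0 - alpha) * accRoll;
    }
};

// Normalized wheel commands in [-1, 1].
struct WheelCommand {
    double left;
    double right;
};

// Pitch sets forward speed, roll sets steering. Positive roll is assumed to
// steer right, which means the left wheel runs faster than the right.
WheelCommand tiltToWheels(double pitchDeg, double rollDeg, double maxTiltDeg) {
    double speed = clampUnit(pitchDeg / maxTiltDeg);
    double steer = clampUnit(rollDeg / maxTiltDeg);
    WheelCommand cmd;
    cmd.left  = clampUnit(speed + steer);
    cmd.right = clampUnit(speed - steer);
    return cmd;
}
```

In use, the controller would call `update` once per sensor sample and send the result of `tiltToWheels(filter.pitchDeg, filter.rollDeg, maxTiltDeg)` to the body unit. A larger `alpha` rejects more accelerometer noise at the cost of slower drift correction.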

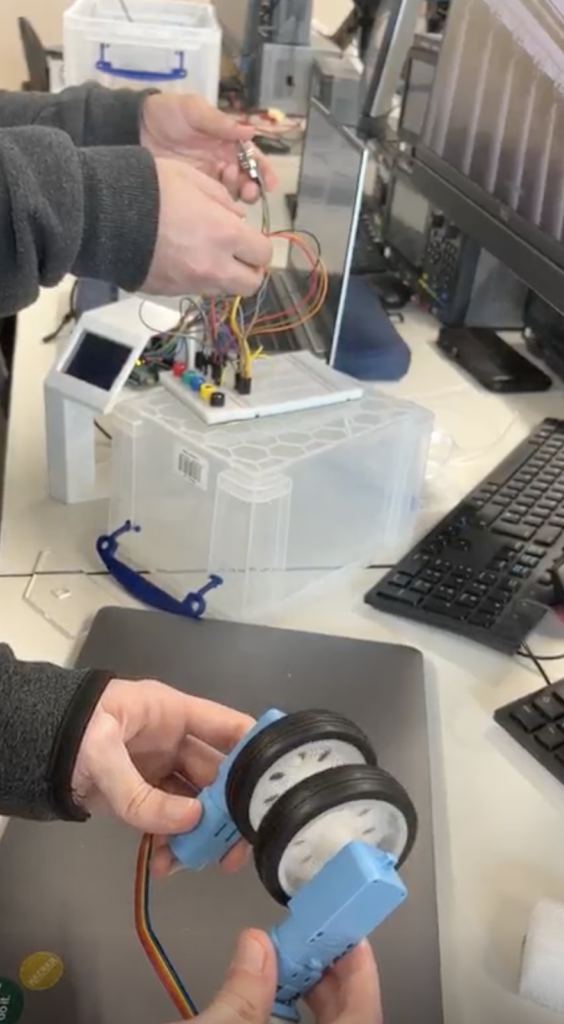

- Differential Drive Control: Programmed independent motor speed control using Pololu Motor Shield, translating sensor fusion outputs into precise differential wheel velocities for smooth curved trajectories

- Real-Time Odometry: Developed encoder-based position tracking system using interrupt-driven encoder readings, calculating displacement through arc approximation and updating the global pose (X, Y, θ) at 240 Hz

- State Monitoring: Integrated ADXL345 accelerometer for collision detection and orientation awareness, distinguishing between normal operation, bumps, tilts, and overturned states through acceleration threshold analysis
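The odometry update described above can be sketched as follows. This uses the common midpoint-heading form of the arc approximation. The struct names, the geometry parameters, and the angle-wrapping choice are assumptions for illustration rather than the project's actual code:

```cpp
#include <cmath>

// Global pose: position in meters, heading in radians.
struct Pose {
    double x;
    double y;
    double theta;
};

// Hypothetical robot geometry; real values depend on the wheels and encoders used.
struct OdometryConfig {
    double wheelRadiusM;  // wheel radius in meters
    double trackWidthM;   // distance between the two drive wheels in meters
    double ticksPerRev;   // encoder ticks per full wheel revolution
};

// Advance the pose by one sampling interval, given the encoder tick deltas
// accumulated by the interrupt handlers since the previous update.
Pose updatePose(const Pose& p, long dTicksLeft, long dTicksRight,
                const OdometryConfig& c) {
    const double kTwoPi = 2.0 * 3.14159265358979323846;
    double metersPerTick = kTwoPi * c.wheelRadiusM / c.ticksPerRev;
    double dLeft  = dTicksLeft  * metersPerTick;
    double dRight = dTicksRight * metersPerTick;

    double dCenter = 0.5 * (dLeft + dRight);          // distance along the arc
    double dTheta  = (dRight - dLeft) / c.trackWidthM; // heading change

    // Approximate the short arc by a chord taken at the mid-interval heading.
    double midHeading = p.theta + 0.5 * dTheta;
    Pose next;
    next.x = p.x + dCenter * std::cos(midHeading);
    next.y = p.y + dCenter * std::sin(midHeading);
    // Wrap the heading into (-pi, pi] so it does not grow without bound.
    double t = p.theta + dTheta;
    next.theta = std::atan2(std::sin(t), std::cos(t));
    return next;
}
```

At a 240 Hz update rate the per-step heading change is small, which is the regime where this chord approximation stays close to the true arc.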

System Architecture:

Built modular dual-microcontroller architecture with dedicated controller and body units communicating over WiFi. Implemented I2C bus with external pull-up resistors for multi-sensor integration, SPI for TFT display communication, and PWM for motor/servo control. Designed custom circuit layouts on perforated boards with organized power rails for reliable power distribution and signal integrity.

Hardware & Mechanical Design:

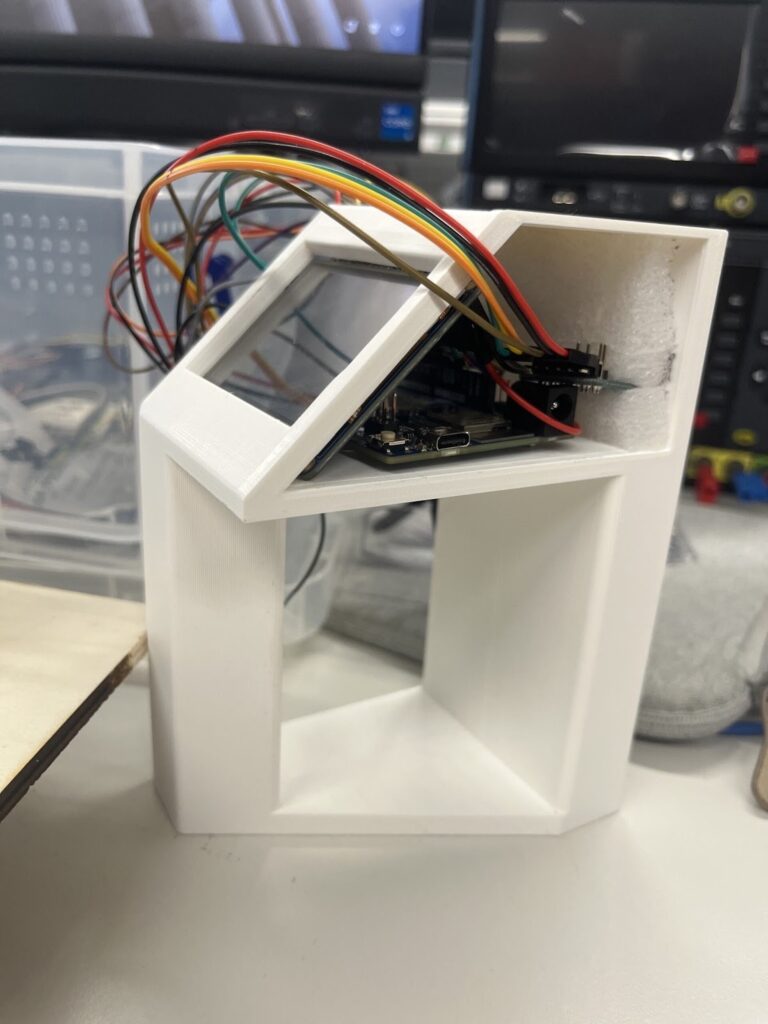

- Created 3D-printed ergonomic controller housing with integrated sensor mounting and acrylic side panels

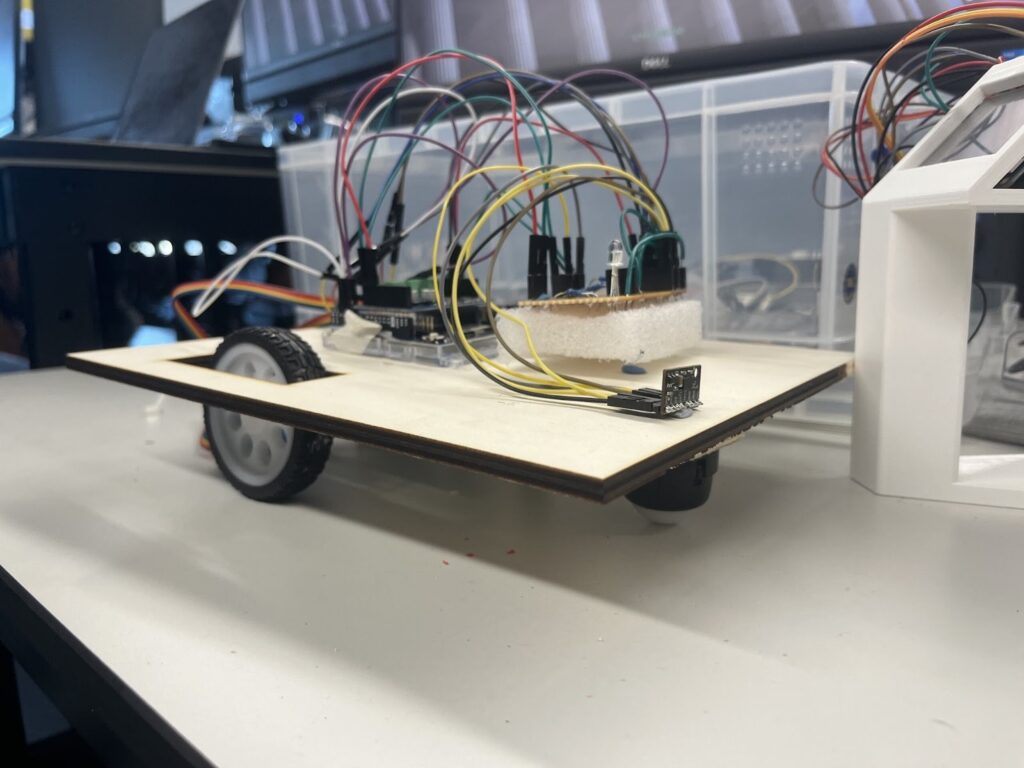

- Fabricated laser-cut plywood chassis with zip-tie motor mounts and modified caster ball assembly

- Designed visual and audio feedback system using RGB LEDs (state indication) and piezo buzzer (playing six retro game themes during normal operation, alerting with 2 kHz tone during anomalies)

- Integrated 2.4″ TFT display for real-time trajectory visualization with interactive position reset functionality

Key Results:

Successfully demonstrated wireless gesture-based robot control with real-time position tracking displayed on TFT screen. Achieved reliable tilt detection (>30° threshold), smooth differential turning, and accurate collision/orientation state classification. System validated core odometry algorithms and sensor fusion techniques despite extended I2C debugging challenges.
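A hedged sketch of threshold-based state classification of the kind described here. Only the 30° tilt threshold comes from the write-up. The bump and overturn thresholds, the decision order, and all names are assumptions for illustration:

```cpp
#include <cmath>

enum class RobotState { Normal, Bump, Tilted, Overturned };

// Thresholds. Only kTiltDeg reflects the write-up; the others are assumed.
const double kTiltDeg = 30.0;         // tilt beyond this counts as "tilted"
const double kOverturnedDeg = 120.0;  // assumed: z axis pointing well below horizontal
const double kBumpDeviationG = 0.5;   // assumed: |a| differing from 1 g by this much

// Classify one accelerometer sample (ax, ay, az in g, z up when level).
RobotState classifyState(double ax, double ay, double az) {
    double mag = std::sqrt(ax * ax + ay * ay + az * az);

    // A magnitude far from 1 g means the robot is being jolted, not just reoriented.
    if (mag <= 0.0 || std::fabs(mag - 1.0) > kBumpDeviationG) {
        return RobotState::Bump;
    }

    // Angle between the measured gravity vector and the body z axis.
    double ratio = az / mag;
    if (ratio > 1.0) ratio = 1.0;
    if (ratio < -1.0) ratio = -1.0;
    double tiltDeg = std::acos(ratio) * 180.0 / 3.14159265358979323846;

    if (tiltDeg > kOverturnedDeg) return RobotState::Overturned;
    if (tiltDeg > kTiltDeg) return RobotState::Tilted;
    return RobotState::Normal;
}
```

A practical version would likely also debounce the result over several samples so that a single noisy reading does not flip the LED and buzzer state.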

Engineering Challenges:

Overcame critical I2C communication failures by adding 1.5 kΩ pull-up resistors after 10+ days of troubleshooting. Adapted motor shield integration strategy when encoder interrupt pins proved insufficient. Resolved SPI bus contention between TFT display and Pmod accelerometer through chip-select multiplexing.

Technologies: Arduino (C++), I2C/SPI/UART protocols, sensor fusion, differential drive kinematics, odometry, PWM control, WiFi (UDP), 3D printing, laser cutting, circuit design, embedded systems